Software Penetration Testing Explained: Scope, Methods and UK Buyer's Guide

Software penetration testing is a hands-on assessment of a software product's security. It's not about scanning a network perimeter for open ports. Instead, it focuses on the application layer: how users authenticate, how data flows between components, and where business logic can be subverted. For product teams, SaaS companies, and organisations building customer-facing platforms, it answers a straightforward question. Can a skilled attacker compromise this software, and what would the impact be?

Despite that, only 11% of UK businesses reported conducting formal penetration testing in 2024, according to the government's Cyber Security Breaches Survey. The gap usually comes down to uncertainty about what to ask for, how to scope it, and what good looks like. This guide covers the practical detail so you can brief a provider with confidence and get meaningful results.

What software penetration testing means

Software penetration testing targets an application and its supporting components. The tester's goal is to find exploitable vulnerabilities by simulating realistic attack techniques against the software, not just its hosting infrastructure.

That makes it different from network penetration testing, which focuses on firewalls, switches, exposed services, and host-level misconfigurations. A network test might tell you a server is running an outdated SSH version. A software test tells you whether an authenticated user can escalate privileges, bypass payment logic, or extract another customer's data through a manipulated API call.
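To make the last example concrete, here is a minimal sketch of the check a tester makes when manipulating an API call: swap in an identifier that belongs to another customer and see whether the server hands the record over. Everything in it is an assumption for illustration (the `INVOICES` data, the two handler functions, the user names); it simulates the idea in memory rather than testing a real API.

```python
# Hypothetical in-memory data standing in for a multi-customer back end.
INVOICES = {
    101: {"owner": "alice", "total": 250},
    102: {"owner": "bob", "total": 990},
}


def get_invoice_vulnerable(current_user: str, invoice_id: int) -> dict:
    """Returns any invoice by ID and never checks who is asking."""
    return INVOICES[invoice_id]


def get_invoice_checked(current_user: str, invoice_id: int) -> dict:
    """Returns the invoice only if it belongs to the caller."""
    invoice = INVOICES[invoice_id]
    if invoice["owner"] != current_user:
        raise PermissionError("invoice belongs to another customer")
    return invoice


def can_read_others_data(handler, attacker: str, victim_invoice_id: int) -> bool:
    """Simulates the manipulated call: the attacker supplies a victim's ID."""
    try:
        handler(attacker, victim_invoice_id)
        return True
    except PermissionError:
        return False


if __name__ == "__main__":
    # alice is authenticated but asks for bob's invoice (ID 102).
    print(can_read_others_data(get_invoice_vulnerable, "alice", 102))
    print(can_read_others_data(get_invoice_checked, "alice", 102))
```

The two handlers differ by a single ownership check, which is why this class of issue tends to be found by a person reasoning about who should see what rather than by a port scan.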

Most modern products need both. But if you're building or buying software, the application-layer test is where the highest-impact findings tend to surface. For more on one of the most common subsets, see our guide to web application penetration testing.

Common scope components

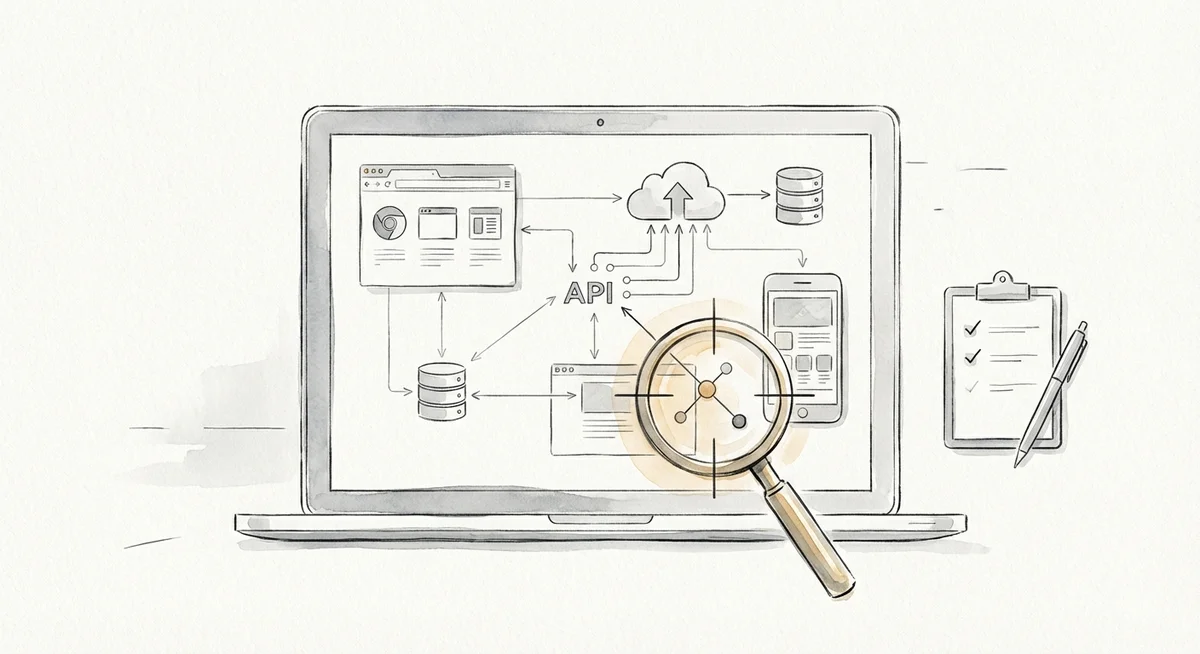

A software penetration test rarely covers just one thing. Most products are a collection of interconnected components, and the scope should reflect that.

Web front ends such as customer portals, admin panels, dashboards, and single-page applications are almost always included. So are APIs, both public-facing and internal, which handle data exchange between services. API testing is often where critical access control issues surface. We cover this in more detail in our API security testing guide.

Mobile applications for iOS and Android may be in scope too, including local data storage, certificate pinning, and communication with back-end services. Thick clients (desktop applications running natively on Windows, macOS, or Linux) sometimes feature, particularly where there's local processing alongside server-side communication.

Beyond the application itself, testers often look at authentication and authorisation flows like SSO integrations, OAuth implementations, MFA, session management, and role-based access controls. Third-party integrations such as payment gateways, identity providers, and webhooks may be relevant. Cloud dependencies like storage buckets, serverless functions, and container configurations sometimes fall in scope where they directly support the application. In some engagements, even the CI/CD pipeline is tested, particularly where supply chain risk is a concern.

Not every engagement covers all of these. The point is to map the components that matter for your product and agree explicitly on what's in and out of scope.

How a software engagement is planned

Good planning is the difference between a useful engagement and an expensive checkbox exercise.

Goals and threat model

Start with what you want to learn. Are you testing before a product launch? Responding to a customer security questionnaire? Validating fixes after an incident? The goal shapes how the tester allocates time and which attack scenarios they prioritise.

A brief threat model, even an informal one, helps focus effort. For a B2B SaaS product, the most relevant threats might be tenant isolation bypass and privilege escalation. For a consumer app, account takeover and data leakage may take priority.

Environments and test accounts

Most software tests run against a staging or pre-production environment that mirrors production as closely as possible. Testing in production is sometimes necessary but introduces risk of disruption, so agree on this explicitly.

Provide the tester with representative test accounts at each privilege level (standard user, administrator, read-only, etc.). This lets them assess vertical and horizontal access control without spending days on credential guessing.
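One way to picture what those accounts are used for is a role-by-action matrix: what each role should be able to do, compared with what the tester actually observed. The sketch below is illustrative only. The role names follow the examples above, while the actions and the observed results are hypothetical.

```python
# Hypothetical expected permissions: role -> action -> should it be allowed?
EXPECTED = {
    "administrator": {"view_report": True, "edit_report": True, "manage_users": True},
    "standard_user": {"view_report": True, "edit_report": True, "manage_users": False},
    "read_only": {"view_report": True, "edit_report": False, "manage_users": False},
}


def find_access_control_gaps(expected: dict, observed: dict) -> list:
    """Compare expected permissions with what each test account could do."""
    gaps = []
    for role, actions in expected.items():
        for action, should_allow in actions.items():
            was_allowed = observed[role][action]
            if was_allowed and not should_allow:
                gaps.append((role, action, "allowed but should be denied"))
            elif should_allow and not was_allowed:
                gaps.append((role, action, "denied but should be allowed"))
    return gaps


if __name__ == "__main__":
    # Pretend results from testing: the read-only account could edit a report.
    observed = {role: dict(actions) for role, actions in EXPECTED.items()}
    observed["read_only"]["edit_report"] = True
    for gap in find_access_control_gaps(EXPECTED, observed):
        print(gap)
```

This covers the vertical case (a lower-privileged role reaching a higher-privileged action). Horizontal checks need at least two accounts at the same level, so the tester can try to reach one account's data from the other.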

Data handling and exclusions

Clarify how test data will be handled, especially if the environment contains personal data subject to UK GDPR. Agree on exclusions, such as denial-of-service testing, third-party services you don't own, and specific endpoints that could trigger irreversible actions.

Success criteria

Define what a successful engagement looks like before it starts. That might mean coverage of all OWASP Top 10 categories, testing of all user roles, or confirmation that previously reported issues have been remediated. Without clear criteria, it's difficult to evaluate the results.

Methodology and standards

A credible software penetration test follows a recognised methodology rather than ad hoc poking. Most UK providers structure their work around a cycle of reconnaissance, analysis, exploitation, and post-test clean-up.

OWASP alignment

The OWASP Web Security Testing Guide (WSTG) is the most widely referenced framework for application-layer testing. It provides a structured checklist covering input validation, session management, error handling, cryptography, and business logic. Testers may also draw on the OWASP Mobile Application Security Testing Guide (MASTG) for mobile engagements or the OWASP API Security Top 10 for API-focused work.

Alignment with OWASP doesn't mean every test is identical. A good tester adapts based on the target's architecture and risk profile, using the framework as a baseline rather than a rigid script.

Quality signals: CREST and CHECK

In the UK, two quality signals come up regularly. CREST is an international accreditation body for penetration testing companies. Member firms employ individually certified testers and follow documented methodologies, and CREST membership is the most common commercial benchmark. CHECK is a scheme overseen by the National Cyber Security Centre (NCSC), primarily used for government and public sector systems. CHECK-approved testers hold specific NCSC-recognised certifications.

Neither guarantees a flawless test, but both indicate the provider meets a verifiable standard of competence and process.

Retesting and evidence handling

A thorough engagement includes retesting after findings have been addressed. This confirms fixes are effective and haven't introduced new issues. Agree upfront whether retesting is included in the original price or charged separately, and set a reasonable window, typically 30 to 90 days after the initial report.

All evidence (screenshots, request/response logs, proof-of-concept scripts) should be handled securely and destroyed or returned at an agreed point after the engagement.

What deliverables should look like

The report is the primary output, and its quality determines how useful the engagement actually is.

You should expect an executive summary written for non-technical stakeholders: overall risk posture, the most significant findings, and any areas that couldn't be tested. Each vulnerability should be a prioritised finding with a clear title, risk rating (typically CVSS or comparable), affected component, and business impact context.
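As a rough illustration of what a prioritised finding carries, the sketch below models the fields listed above and maps a CVSS score to its qualitative band. The thresholds are the CVSS v3.x severity ratings. The `Finding` class, its field names, and the sample findings are assumptions made for this example, not a reporting standard.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One report entry; field names are illustrative, not a standard schema."""
    title: str
    cvss_score: float  # CVSS base score, 0.0 to 10.0
    affected_component: str
    business_impact: str


def severity_band(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def prioritise(findings: list[Finding]) -> list[Finding]:
    """Order findings from highest to lowest score."""
    return sorted(findings, key=lambda f: f.cvss_score, reverse=True)


if __name__ == "__main__":
    # Hypothetical findings for illustration only.
    report = [
        Finding("Verbose error messages", 3.1, "Customer portal", "Aids reconnaissance"),
        Finding("Authentication bypass", 9.8, "Login API", "Full account takeover"),
    ]
    for f in prioritise(report):
        print(f"{severity_band(f.cvss_score)}: {f.title} ({f.affected_component})")
```

A score alone is not the whole picture, which is why the business impact field sits alongside it: the same technical issue can matter more or less depending on what the affected component does.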

The findings that matter most need exploit narratives: step-by-step descriptions of how each vulnerability was identified and exploited, detailed enough for your development team to reproduce them, rather than vague summaries. Remediation guidance should be specific and actionable, suggesting how to fix an issue, not just flagging it. Once remediation is complete, a retest confirmation should document which issues have been resolved and which remain open.

Be cautious of reports that list hundreds of findings without context. A long list of low-severity items from automated scanning does not constitute a penetration test report.

When to test

Timing matters. Testing before a major launch or release catches issues when they're cheapest to fix. Significant architectural changes like new authentication systems, API redesigns, or cloud migrations all warrant fresh testing. Large buyers increasingly require evidence of independent security testing as part of procurement, so enterprise sales cycles often trigger engagements. Inheriting a codebase through acquisition is another common reason.

For ongoing assurance, annual testing is a common baseline. Organisations with frequent release cycles may test quarterly or integrate testing into their development pipeline.

Testing only once and treating the report as permanently valid is a common mistake. Software changes, threats evolve, and yesterday's clean result doesn't cover today's new feature.

Cost and effort drivers

UK pricing for software penetration testing is typically a day rate multiplied by the number of testing days. Current market rates for experienced, accredited teams generally fall between £1,000 and £2,000 per day.
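The arithmetic is simple enough to sketch. The function below multiplies a number of testing days by the low and high ends of the day-rate range quoted above. The eight-day figure in the usage example is hypothetical; real day counts come out of scoping.

```python
def estimate_cost_range(
    testing_days: int,
    low_day_rate: int = 1000,
    high_day_rate: int = 2000,
) -> tuple[int, int]:
    """Return (low, high) cost in pounds as day rate times testing days."""
    if testing_days < 1:
        raise ValueError("an engagement needs at least one testing day")
    return testing_days * low_day_rate, testing_days * high_day_rate


if __name__ == "__main__":
    # Hypothetical engagement length, for illustration only.
    low, high = estimate_cost_range(8)
    print(f"8 testing days: £{low:,} to £{high:,}")
```

For eight days this gives a range of £8,000 to £16,000, before any retesting or reporting days a provider may quote separately.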

Total costs vary significantly. A small web application with limited functionality might cost £2,500 to £6,000. A mid-complexity SaaS product with multiple user roles and API integrations often falls in the £8,000 to £15,000 range. Complex enterprise environments with mobile clients, thick clients, and extensive integrations can exceed £25,000. For a more detailed breakdown, see our guide on how much a pen test should cost.

The main factors driving the number of days required are the number of user roles and privilege levels, the number and complexity of API endpoints, whether mobile or thick-client components are in scope, authentication complexity (SSO, MFA, federated identity), the testing approach (black-box, grey-box, or white-box), and retesting requirements.

Be wary of quotes that arrive without detailed scoping questions. If a provider offers a fixed price before understanding your application, you're likely buying a lightweight automated scan rather than a genuine penetration test.

How to compare providers and write a useful scope

Comparing providers

When evaluating firms, look at their accreditations (CREST or CHECK, depending on your sector) and whether they have relevant experience with products like yours. Ask for anonymised case studies or references. Find out whether they'll name the individuals performing the work and share their qualifications. A good provider explains their methodology before you sign, not after. Request a redacted sample report to judge quality and depth. Confirm whether remediation retesting is included, and ask how critical findings are communicated during the engagement. A provider that waits until the final report to disclose a severe vulnerability isn't serving you well.

Writing a useful scope document

A well-written scope document saves time for both sides and produces more comparable proposals. Include a brief description of the product and its purpose, the technology stack (languages, frameworks, hosting platform), a list of in-scope components (URLs, API documentation, mobile app versions), user roles and access levels with a note on whether test accounts will be provided, the test environment and any restrictions, compliance or regulatory drivers (ISO 27001 audit preparation, PCI DSS, etc.), desired timeline and hard deadlines, and expected deliverables and reporting format.

The more specific your brief, the more useful the proposals you receive.
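If it helps to treat the scope document as a checklist, the sketch below models the sections listed above as a simple structure and reports which ones are still empty. The class, field names, and sample values are assumptions for illustration, not a template any provider requires.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class ScopeDocument:
    """Sections of a scope brief; names are illustrative only."""
    product_description: str = ""
    technology_stack: list[str] = field(default_factory=list)
    in_scope_components: list[str] = field(default_factory=list)
    user_roles: list[str] = field(default_factory=list)
    test_accounts_provided: Optional[bool] = None  # None means not yet decided
    test_environment: str = ""  # include any restrictions here
    compliance_drivers: list[str] = field(default_factory=list)
    timeline: str = ""
    deliverables: str = ""


def missing_sections(scope: ScopeDocument) -> list[str]:
    """Names of sections left empty or undecided, in declaration order."""
    missing = []
    for f in fields(scope):
        value = getattr(scope, f.name)
        if value is None or value == "" or value == []:
            missing.append(f.name)
    return missing


if __name__ == "__main__":
    # Hypothetical draft brief with only the first few sections filled in.
    draft = ScopeDocument(
        product_description="B2B invoicing platform",
        technology_stack=["Python", "PostgreSQL"],
        in_scope_components=["https://staging.example.com", "REST API v2"],
        user_roles=["administrator", "standard user", "read-only"],
    )
    print(missing_sections(draft))
```

An empty list of compliance drivers is flagged here even though some products genuinely have none; in that case, saying so explicitly in the brief is still useful to the provider.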

Common misunderstandings

Vulnerability scanning is not penetration testing

A vulnerability scan uses an automated tool to check for known issues against a database of signatures. It's fast, repeatable, and useful as a hygiene check, but it doesn't replicate how an attacker thinks. It can't test business logic, chain multiple low-severity issues into a high-impact attack, or assess whether access controls are enforced correctly.

A penetration test uses manual techniques, creative reasoning, and real exploitation to find issues that scanners miss. If your provider's methodology is entirely automated, you're paying for a scan, not a test.
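A small, hypothetical example shows why business logic sits outside what signature matching can see. The flawed function below contains no known-vulnerable component and no malformed input, yet it lets a customer reduce their bill by ordering a negative quantity. The product names, prices, and function names are all invented for this sketch.

```python
# Hypothetical price list in pounds.
PRICES = {"widget": 20, "gift_card": 50}


def order_total_flawed(items: list[tuple[str, int]]) -> int:
    """Sums price times quantity and trusts whatever quantity is sent."""
    return sum(PRICES[name] * quantity for name, quantity in items)


def order_total_validated(items: list[tuple[str, int]]) -> int:
    """Same calculation, but rejects quantities below one."""
    for name, quantity in items:
        if quantity < 1:
            raise ValueError(f"invalid quantity for {name}: {quantity}")
    return order_total_flawed(items)


if __name__ == "__main__":
    # Three widgets (60) plus minus-one gift card (-50).
    basket = [("widget", 3), ("gift_card", -1)]
    print(order_total_flawed(basket))
    try:
        order_total_validated(basket)
    except ValueError as error:
        print(f"rejected: {error}")
```

The flawed version prices the basket at 10 instead of 60. Spotting that requires understanding what the application is supposed to do, which is the part of the work a manual tester contributes.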

A clean report does not mean the software is secure

A penetration test is a point-in-time assessment conducted within a defined scope and timeframe. No critical findings means the tester didn't find exploitable issues within those constraints, not that none exist. This is why recurring testing and good development practices both matter.

Compliance does not equal security

Passing a compliance requirement like Cyber Essentials or an ISO 27001 audit is valuable, but these frameworks set a baseline. A penetration test scoped purely to tick a compliance box may miss application-specific risks that fall outside the framework's checklist. Aim for testing that reflects your actual threat landscape, not just the minimum a standard requires.

More findings do not always mean worse security

A report with 40 findings isn't necessarily worse than one with five. What matters is severity, exploitability, and business impact. A single critical authentication bypass is more significant than dozens of informational observations.