Social Engineering Penetration Testing: UK Guide for 2026

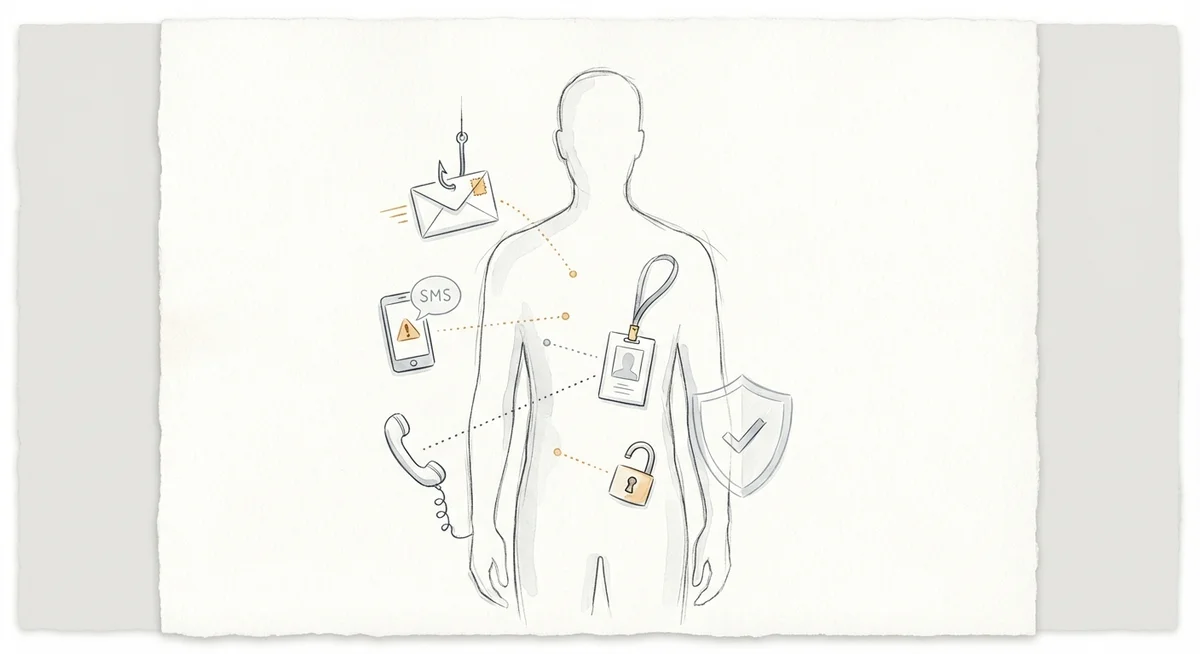

Social engineering penetration testing simulates attacks that target people rather than infrastructure. The goal is to find weaknesses in how an organisation detects, resists, and reports manipulation across email, phone, SMS, and physical access. According to recent industry data, human factors are still involved in roughly 60% of breaches, so testing the human layer is no longer optional for UK organisations that take security seriously.

But social engineering testing is frequently misunderstood. It is not a tool for catching people out or building a list of employees to blame. Done well, it gives you evidence-based insight into gaps across people, processes, and technology, and feeds directly into stronger awareness programmes and technical controls. This guide covers how to plan, scope, run, and act on social engineering tests in a UK context.

What Social Engineering Testing Is (and Is Not)

Social engineering penetration testing is a controlled, authorised simulation of tactics that real attackers use to manipulate people. Testers try to persuade staff to take actions like clicking a link, sharing credentials, granting physical access, or divulging sensitive information, using the same psychological techniques seen in genuine attacks.

It is not a compliance tick-box exercise with no follow-through. It is not a way to single out or discipline individuals. It does not replace security awareness training (it complements it). And it is never an excuse to use genuinely harmful or distressing pretexts.

If you are new to penetration testing more broadly, social engineering testing sits alongside network, application, and infrastructure assessments as one layer of a broader security programme.

Common Social Engineering Scenarios

Phishing

Simulated malicious emails designed to steal credentials or get users to open attachments. In 2026, phishing simulations increasingly mirror the sophistication of real campaigns, using personalised lures, cloned branding, and realistic landing pages. Phishing remains the most commonly tested vector because email is still the primary way attackers get initial access.

Vishing

Voice-based attacks over the phone. Testers may impersonate IT support, a supplier, or a senior colleague to extract information or prompt an action. AI-generated voice cloning has made vishing scenarios more realistic and more important to test against.

Smishing

SMS-based social engineering targeting mobile devices. These tests evaluate whether staff treat text messages with the same caution as emails, particularly when messages appear to come from internal systems or delivery services.

Pretexting

A fabricated but plausible scenario designed to build trust and extract information over time. A tester might pose as a new starter needing access credentials, or a supplier chasing an overdue invoice. Pretexting often underpins phishing, vishing, and physical scenarios rather than standing alone.

Physical Social Engineering

Attempting to gain unauthorised physical access to offices, server rooms, or secure areas. Techniques include tailgating through access-controlled doors, impersonating delivery personnel, or using cloned access badges. Physical tests carry additional safety considerations and typically require tighter scoping.

Defining Scope and Rules of Engagement

A clear, written Rules of Engagement (RoE) document is the foundation of any safe and useful test. Without it, you risk operational disruption, legal exposure, and a loss of trust with staff.

Your RoE should cover:

- Channels in scope. Which vectors will be tested: email, phone, SMS, physical, or a combination?

- Target lists. Will the test cover all staff, specific departments, or particular roles? Are contractors and third parties included?

- Geographic limits and timing constraints. Are all office locations in scope? Are there blackout periods (month-end close, major product launches) where testing could cause disproportionate disruption?

- Exclusions. Critical operational systems, emergency services numbers, and high-value financial transaction windows should typically be excluded.

- Safety controls. A defined "kill switch" allowing immediate halt of activity, plus a single point of contact (SPOC) with authority to pause or stop the test at any time.

- Data handling. How captured credentials or personal data will be stored, who can access it, and when it will be destroyed.
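One way to keep the RoE unambiguous is to mirror its key fields in a small machine-readable record that the testing team checks before each activity. The Python sketch below is illustrative only: the class name `RulesOfEngagement`, its fields, and the example values are assumptions for this guide, not a standard schema, and it does not replace the signed document.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RulesOfEngagement:
    """Hypothetical, simplified record of an agreed RoE (not a standard schema)."""
    channels: set[str]                                  # vectors in scope, e.g. {"email", "phone"}
    excluded_targets: set[str] = field(default_factory=set)
    blackout_periods: list[tuple[date, date]] = field(default_factory=list)
    halted: bool = False                                # set to True when the kill switch is used

    def is_permitted(self, channel: str, target: str, on: date) -> bool:
        """Return True only if the activity falls inside the agreed scope."""
        if self.halted:
            return False
        if channel not in self.channels:
            return False
        if target in self.excluded_targets:
            return False
        # Blackout periods are treated as inclusive date ranges.
        return not any(start <= on <= end for start, end in self.blackout_periods)


# Example values are made up for illustration.
roe = RulesOfEngagement(
    channels={"email", "phone"},
    excluded_targets={"emergency-line"},
    blackout_periods=[(date(2026, 3, 28), date(2026, 3, 31))],  # e.g. month-end close
)
```

Checking `roe.is_permitted(...)` before each call or send gives the SPOC's halt decision an immediate effect: once `halted` is set, every check fails regardless of channel or target.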

Before any engagement begins, make sure you have reviewed the key steps to take before hiring a penetration testing company. Many of the same principles around scoping and authorisation apply.

Legal and Ethical Guardrails in the UK

Social engineering testing intersects with several areas of UK law. This guide does not constitute legal advice, but the following considerations are important to discuss with your legal counsel and Data Protection Officer (DPO) before testing begins.

Computer Misuse Act 1990. Explicit, written authorisation from system owners is essential. Without it, even simulated attacks could expose testers and the commissioning organisation to criminal liability.

UK GDPR and Data Protection Act 2018. Testing may involve collecting personal data (credentials entered into a simulated phishing page, for example). The ICO expects organisations to minimise the personal data collected, handle it securely, and have a lawful basis for processing it. The ICO's own guidance on phishing highlights the importance of organisations learning from common attack patterns.

Fraud Act 2006. The Act covers fraud by false representation, so pretexting scenarios involving impersonation should be carefully scoped and documented to remain within the boundaries of authorised testing.

Employment law. Results should not be used to take disciplinary action against individuals. This is both an ethical best practice and a way to avoid potential employment disputes.

A reputable testing provider will expect to see written authorisation and will raise concerns if it is not forthcoming. Treat any provider who does not ask for it as a red flag.

Stakeholder Communication

Before the Test

Brief a small, need-to-know group: typically the CISO or security lead, the SPOC, legal counsel, and the DPO. Decide whether management or HR need advance notice, particularly for physical or vishing tests that could cause alarm. Agree the communication plan for when the test concludes. Staff should learn what happened and why.

During the Test

Keep the SPOC available and responsive throughout. If a phishing simulation triggers a genuine incident response, the SPOC must be able to confirm quickly that the activity is part of the authorised test. Log any safety concerns raised by the testing team in real time.

After the Test

Notify all staff that a test took place, what the objectives were, and what the organisation learned at an aggregate level. Do not publish individual results or name specific people. Frame everything around organisational improvement, not individual failure.

Metrics That Matter Beyond Click Rate

Click rate is the most commonly cited metric from phishing simulations, but on its own it tells you very little. A 15% click rate means something very different if most of those users then reported the message than if most of them went on to enter credentials.

Reporting rate. What percentage of recipients reported the suspicious message to the security team? A high reporting rate, even alongside a moderate click rate, signals a healthy security culture.

Credential submission rate. Of those who clicked, how many actually entered credentials or sensitive data? This measures the depth of compromise, not just initial engagement.

Time to report and time to organisational response. How quickly did the first report reach the security team, and how long did it take the SOC or IT team to act on it (blocking a domain, sending an all-staff warning)?

Repeat failure rate. Are the same individuals or teams consistently vulnerable across successive tests? This highlights where targeted support is needed.

Physical access success rate. For physical tests, how many access controls were bypassed, and at what point was the tester challenged?

Track these metrics over successive test cycles. Improvement over time is more meaningful than any single data point.
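As a rough illustration of how the phishing metrics above relate to each other, the following Python sketch computes them from a simple event log. The event format, the function name `summarise_campaign`, and the sample data are assumptions made for this example; real phishing platforms export their own formats. The function assumes at least one recipient.

```python
from datetime import datetime, timedelta
from typing import Optional


def summarise_campaign(recipients: int, events: list[dict], sent_at: datetime) -> dict:
    """Compute aggregate metrics from a hypothetical event log.

    Each event is assumed to look like:
    {"user": "u1", "action": "clicked" | "submitted" | "reported", "at": datetime}
    """
    def users(action: str) -> set:
        return {e["user"] for e in events if e["action"] == action}

    clicked, submitted, reported = users("clicked"), users("submitted"), users("reported")
    report_times = [e["at"] for e in events if e["action"] == "reported"]
    first_report: Optional[timedelta] = min(report_times) - sent_at if report_times else None

    return {
        "click_rate": len(clicked) / recipients,
        "reporting_rate": len(reported) / recipients,
        # Measured against those who clicked, matching the definition above.
        "credential_submission_rate": len(submitted & clicked) / len(clicked) if clicked else 0.0,
        "time_to_first_report": first_report,
    }


# Made-up sample data: 10 recipients, email sent at 09:00.
sent = datetime(2026, 1, 12, 9, 0)
events = [
    {"user": "u1", "action": "clicked", "at": sent + timedelta(minutes=3)},
    {"user": "u1", "action": "submitted", "at": sent + timedelta(minutes=4)},
    {"user": "u2", "action": "clicked", "at": sent + timedelta(minutes=5)},
    {"user": "u3", "action": "reported", "at": sent + timedelta(minutes=7)},
    {"user": "u2", "action": "reported", "at": sent + timedelta(minutes=30)},
]
summary = summarise_campaign(recipients=10, events=events, sent_at=sent)
```

The function returns aggregates only and never a list of named users, which keeps the output suitable for sharing in line with a blame-free approach.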

Running Blame-Free Debriefs

The debrief is where testing delivers its real value, or where trust breaks down. A poorly handled debrief makes staff defensive and less likely to report genuine threats in future.

Present aggregated data only. Share departmental or organisational trends, not individual names or click lists.

Focus on systemic causes. If 40% of a department clicked a simulated phishing email, the question is not "who failed?" but "what made this pretext effective, and what controls were missing?"

Include positive findings. Highlight where staff reported promptly, challenged suspicious requests, or followed procedure correctly.

Invite input. Ask staff what made the simulation convincing and what tools or processes would have helped them respond better.

Every debrief should produce a clear list of remediation actions with owners and deadlines.

Converting Findings into Measurable Remediation

Findings from social engineering tests should map to specific, trackable actions.

On the people side: deliver targeted coaching to teams or roles with higher vulnerability rather than blanket retraining. Run scenario-based workshops using anonymised examples from the test. Embed social engineering awareness into onboarding for new starters and contractors.

On process: review and update escalation paths for reporting suspicious communications. Strengthen verification procedures for sensitive requests (payment changes, password resets, visitor access). Make sure helpdesk and reception teams have clear protocols for identity verification.

On technology: tune Secure Email Gateways based on the types of simulated emails that bypassed filtering. Implement or enforce DMARC, DKIM, and SPF to reduce domain spoofing risk. Deploy phishing-resistant multi-factor authentication such as FIDO2 security keys, particularly for privileged accounts. Review mobile device management policies to address smishing risks.
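To make the DMARC point concrete, the sketch below checks whether a DMARC record asks receiving servers to act on failing mail. It is a simplified illustration: the function names are hypothetical, the record string is a made-up example for `example.com`, no DNS lookup is performed, and tags such as `pct` and `sp` are ignored.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag/value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(record: str) -> bool:
    """True if the policy asks receivers to quarantine or reject failing mail."""
    tags = parse_dmarc(record)
    return tags.get("v") == "DMARC1" and tags.get("p", "").lower() in {"quarantine", "reject"}


# Example record only; a real one is published as a TXT record at _dmarc.<your domain>.
example = "v=DMARC1; p=none; rua=mailto:dmarc-reports@example.com"
```

A policy of `p=none` only requests reports, so a domain at that stage is monitoring spoofing rather than blocking it. Moving to `quarantine` or `reject` is what reduces the spoofing risk.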

Set measurable targets, for example "increase reporting rate from 12% to 30% within two test cycles", and review progress against them after every cycle.
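A target phrased this way can be checked mechanically. The short Python sketch below assumes you record one reporting rate per test cycle after the baseline; the function name and the sample figures are illustrative, not results from a real programme.

```python
def target_met(rates_by_cycle: list[float], target: float, within_cycles: int) -> bool:
    """True if any of the first `within_cycles` results reaches the target rate."""
    return any(rate >= target for rate in rates_by_cycle[:within_cycles])


# Baseline was 12%; these are made-up reporting rates for the following cycles.
on_track = target_met([0.19, 0.31], target=0.30, within_cycles=2)
too_slow = target_met([0.19, 0.24, 0.33], target=0.30, within_cycles=2)
```

In the second case the target is eventually reached, but not within the agreed two cycles, which is a prompt to revisit the remediation plan rather than a pass.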

Buyer Checklist: Selecting a Social Engineering Testing Provider

No single factor here is decisive, but together they help distinguish credible firms from those offering a superficial service.

Relevant accreditation. Look for CREST membership or CHECK status, or equivalent recognised certifications for the testers who will deliver the work.

UK legal knowledge. The provider should demonstrate clear understanding of the Computer Misuse Act, UK GDPR, and ICO expectations. They should ask about your authorisation process, not skip it.

Defined safety protocols. Ask for evidence of abort procedures, SPOC requirements, and how they handle situations where a test causes unintended operational impact.

Professional indemnity insurance. Confirm the provider carries adequate cover for the engagement.

Comprehensive reporting. Expect both an executive summary for senior stakeholders and a detailed technical report with evidence, analysis, and prioritised remediation guidance.

Remediation support. The best providers offer post-test workshops or follow-up sessions to help translate findings into action, not just a PDF report.

Ethical approach. Ask how the provider handles individual data, whether they support blame-free debriefs, and how they ensure pretexts remain proportionate.

Transparent pricing. Understand what is included. Costs vary significantly depending on scope and complexity, so make sure you can compare on a like-for-like basis. For broader context on budgeting, see our guide on how much a penetration test should cost.

How Social Engineering Testing Fits the Bigger Picture

Social engineering testing delivers the most value when it is integrated into a broader security programme rather than run as a standalone exercise.

Network and application tests find technical vulnerabilities. Social engineering tests reveal how attackers exploit people to bypass those controls. Together, they give a realistic picture of organisational risk.

Real, anonymised results from social engineering tests also make awareness training more relevant and credible than generic e-learning modules. Staff engage more when they see examples drawn from their own organisation.

Frameworks like ISO 27001 and Cyber Essentials Plus expect organisations to address the human element. Social engineering test results provide evidence of proactive risk management for auditors and boards.

Test findings also often reveal gaps in detection and escalation that can be addressed through updated playbooks and tabletop exercises.

Run social engineering tests at least annually, and more frequently if your organisation faces elevated risk or has undergone significant change (rapid growth, office moves, new communication platforms). Vary the scenarios and vectors between cycles to avoid predictability.